Explore how NVIDIA, AMD and Qualcomm are battling in the fast-growing AI chip market — their strengths, weaknesses, market outlook & who will lead by 2030.

🧭 AI Chip Race 2025: Nvidia vs AMD vs Qualcomm – Who’s Leading the Future?

Artificial Intelligence has become the new electricity — powering everything from chatbots and autonomous cars to robotics and creative tools. But at the core of this revolution lies a powerful engine — AI chips.

As of 2025, three giants are battling to dominate this fast-growing, high-stakes market: Nvidia, AMD, and Qualcomm.

Let’s explore how each company is shaping the AI hardware race — and who I personally believe will come out on top.

⚙️ The AI Chip Revolution: Why It Matters

Before diving into the competition, let’s understand why AI chips are so crucial.

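AI models like GPT-5, Claude 4.5, and Gemini 2.0 require massive processing power to train and run. Traditional CPUs, built for fast sequential work on a relatively small number of cores, can’t handle these highly parallel workloads efficiently.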

That’s where GPUs, NPUs (Neural Processing Units), and custom AI accelerators come in. They are built for massive parallelism, running enormous numbers of arithmetic operations at once on the matrix math that dominates AI workloads.
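A quick way to see why CPUs fall short is to estimate the compute involved. A widely used rule of thumb puts the training cost of a dense transformer at about 6 × parameters × training tokens floating-point operations (FLOPs). The minimal sketch below applies it to a hypothetical 70-billion-parameter model trained on 2 trillion tokens; the model size, token count, cluster size, per-chip peak throughput and 40% utilization are all illustrative assumptions, not figures for any real model or product.

```python
# Rough training-compute estimate using the common ~6 * N * D FLOPs
# rule of thumb for dense transformers (an approximation, not exact).
# All inputs in the example run are hypothetical, illustrative values.

def training_flops(params: float, tokens: float) -> float:
    """Approximate total FLOPs to train a dense transformer."""
    return 6.0 * params * tokens


def training_days(total_flops: float, num_chips: int,
                  peak_flops_per_chip: float, utilization: float) -> float:
    """Wall-clock days, assuming a fixed fraction of peak throughput is achieved."""
    effective_flops_per_s = num_chips * peak_flops_per_chip * utilization
    return total_flops / effective_flops_per_s / 86_400  # seconds per day


if __name__ == "__main__":
    flops = training_flops(params=70e9, tokens=2e12)  # hypothetical model
    print(f"Total compute: {flops:.2e} FLOPs")
    # Hypothetical cluster: 1,000 accelerators at 1e15 FLOP/s peak, 40% utilization
    print(f"Time: {training_days(flops, 1_000, 1e15, 0.4):.1f} days")
```

With these inputs it reports about 8.4 × 10^23 FLOPs and roughly 24 days on 1,000 accelerators. The exact numbers matter less than the scale: spreading that much matrix math across thousands of parallel chips is exactly what GPUs and AI accelerators are built for.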

From data centers to edge devices, the demand for AI chips has exploded. According to industry reports, the global AI chip market is expected to surpass $250 billion by 2030, and whoever leads in 2025 will shape that future.

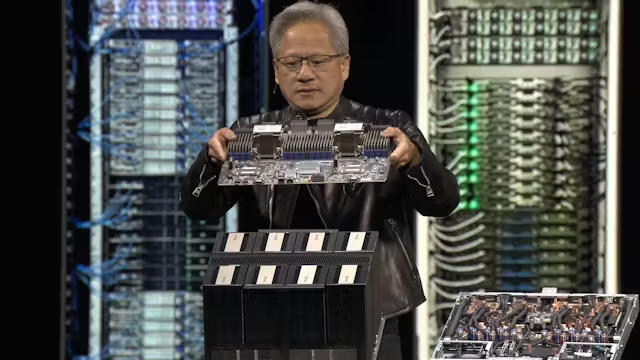

🟩 Nvidia – Still the King of AI Silicon

No discussion about AI chips is complete without Nvidia. The company has long dominated the GPU industry, and it continues to set benchmarks for AI computing.

🔋 Nvidia’s 2025 Flagship: Blackwell Architecture

Nvidia’s Blackwell GPUs, announced in 2024 and ramping in volume through 2025, succeed the Hopper-based H100 and H200.

These chips are built to handle large-scale AI models, offering:

- Roughly 2x or more the performance of the Hopper generation, depending on workload and numeric precision (per Nvidia)

- Massive energy efficiency improvements

- Integration with NVLink and Grace CPU for faster data throughput

Major cloud providers and AI labs, including OpenAI, run much of their training and inference on Nvidia GPUs, giving Nvidia a firm grip on the AI data center market.

💰 Market Dominance

Nvidia controls nearly 80% of the AI chip market for training large models. Its ecosystem — including CUDA software, TensorRT, and DGX servers — keeps developers locked in.

While competitors are catching up in hardware, Nvidia’s software advantage gives it unmatched dominance.

🧩 Where Nvidia Leads

- Best-in-class AI training performance

- Deep integration with major AI labs

- Complete ecosystem (hardware + software)

⚠️ Challenges

- High pricing (limiting smaller AI startups)

- Overreliance on data center GPUs

- Competitors focusing on cost-efficient alternatives

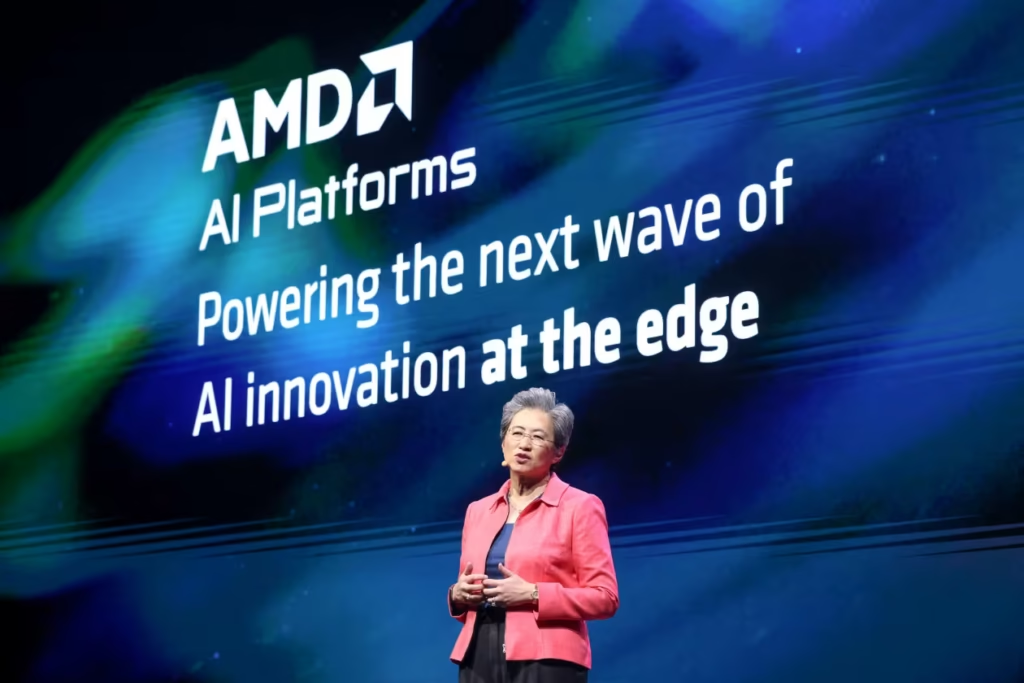

🟥 AMD – The Challenger Gaining Ground

For years, AMD (Advanced Micro Devices) played second fiddle to Nvidia. But in 2025, the story has changed. AMD’s Instinct MI300 series has given the company a real competitive edge.

🚀 MI300: AMD’s Big Bet on AI

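AMD’s MI300X (a GPU accelerator) and MI300A (an APU, or accelerated processing unit) are designed for massive AI and HPC workloads.

The MI300A combines CPU and GPU cores in a single package that shares one pool of HBM memory, which avoids copying data between separate CPU and GPU memories and reduces latency.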

Key features:

- Up to 192GB of HBM3 memory on the MI300X (128GB on the MI300A), with far higher bandwidth than the GDDR memory used on consumer GPUs

- 5.3 TB/s peak memory bandwidth

- Targeted at training and serving LLMs (Large Language Models)
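Memory capacity and bandwidth matter because of two back-of-the-envelope constraints: a model’s weights must fit in accelerator memory, and when generating text one token at a time, every weight is typically read from memory once per token, so bandwidth caps single-stream speed. The sketch below is a simplified illustration under stated assumptions: it counts weights only (no KV cache or activations), uses a hypothetical 70-billion-parameter model at 16-bit precision, and assumes a round 5 TB/s of bandwidth. The 128 GB and 192 GB capacities correspond to the MI300A and MI300X respectively.

```python
# Back-of-the-envelope LLM memory math (simplified: weights only,
# ignoring KV cache, activations and runtime overhead).

def weight_bytes(params: float, bytes_per_param: float) -> float:
    """Memory needed to hold the model weights, in bytes."""
    return params * bytes_per_param


def fits_in_memory(params: float, bytes_per_param: float, capacity_gb: float) -> bool:
    """True if the weights alone fit on one accelerator (1 GB = 1e9 bytes)."""
    return weight_bytes(params, bytes_per_param) <= capacity_gb * 1e9


def decode_tokens_per_s_upper_bound(params: float, bytes_per_param: float,
                                    bandwidth_tb_s: float) -> float:
    """At batch size 1, each new token reads every weight once, so memory
    bandwidth caps single-stream generation speed."""
    return bandwidth_tb_s * 1e12 / weight_bytes(params, bytes_per_param)


if __name__ == "__main__":
    params = 70e9  # hypothetical 70B-parameter model
    fp16 = 2.0     # bytes per parameter at 16-bit precision
    for capacity in (128, 192):
        print(f"{capacity} GB fits 70B @ FP16: {fits_in_memory(params, fp16, capacity)}")
    # Assumed round bandwidth figure, for illustration only
    print(f"Max tokens/s at 5 TB/s: {decode_tokens_per_s_upper_bound(params, fp16, 5.0):.1f}")
```

Under these assumptions, 140 GB of 16-bit weights do not fit in 128 GB but do fit in 192 GB on a single device, and 5 TB/s caps batch-size-1 generation at roughly 36 tokens per second. Real deployments batch many requests and shard models across chips, so treat these as rough bounds, not benchmarks.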

These chips are already being adopted by Microsoft Azure, Meta, and Oracle Cloud — major wins that signal AMD’s serious AI ambitions.

💪 AMD’s Strengths

- Competitive pricing compared to Nvidia

- Strong partnerships with cloud platforms

- Hybrid architecture (CPU + GPU synergy)

⚙️ Challenges

- Smaller AI software ecosystem (vs Nvidia CUDA)

- Slower adoption among smaller AI startups

- Still building brand recognition in the AI research community

🔍 Where AMD Wins

AMD might not beat Nvidia on raw performance, but it competes strongly on memory capacity and cost-performance balance, crucial factors for widespread AI adoption.

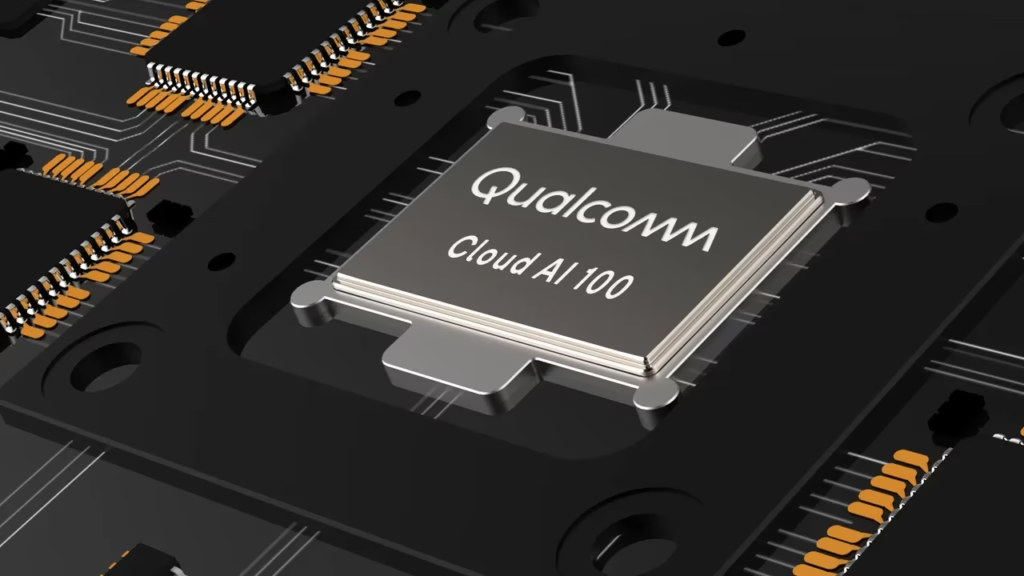

🟦 Qualcomm – The Edge AI Innovator

While Nvidia and AMD dominate the data center side, Qualcomm is quietly leading the Edge AI revolution.

📱 Snapdragon X and NPU Revolution

Qualcomm’s Snapdragon X Elite chips, built with the Oryon CPU and Hexagon NPU, power next-gen AI laptops, while its Snapdragon 8 Elite brings Oryon cores and a Hexagon NPU to smartphones.

These processors deliver on-device AI performance that doesn’t rely on cloud computing — perfect for privacy and speed.

🧠 Qualcomm’s AI Advantage

- Strong presence in smartphones, wearables, and IoT

- Focus on low-power AI inference

- Partnerships with Microsoft for Copilot+ PCs

For example, new AI PCs running Windows 11 with Snapdragon X Elite can run AI models locally, handling tasks such as image generation, summarization, and translation without sending data to cloud GPUs.
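Running models locally is largely a memory problem: the weights must fit in the device’s RAM alongside everything else. Quantizing weights to fewer bits is one common technique for shrinking them. The minimal sketch below compares weight footprints for a hypothetical 7-billion-parameter model at several precisions; it does not describe Qualcomm’s toolchain, and the parameter count is an assumption chosen for illustration.

```python
# Approximate weight footprint of a model at different precisions.
# Hypothetical 7B-parameter model; real runtimes add overhead
# (KV cache, activations, runtime buffers) on top of this.

def footprint_gb(params: float, bits_per_param: int) -> float:
    """Weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return params * bits_per_param / 8 / 1e9


if __name__ == "__main__":
    params = 7e9  # hypothetical model size
    for bits in (16, 8, 4):
        print(f"{bits}-bit weights: {footprint_gb(params, bits):.1f} GB")
```

At 16 bits the weights alone take 14 GB, while 4-bit quantization cuts that to 3.5 GB, small enough to leave room for the operating system and apps on a typical 16 GB laptop. Lower precision can cost some accuracy, which is the trade-off on-device AI has to manage.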

⚙️ Challenges

- Limited in high-end data center AI

- Competing against Apple Silicon for mobile AI

- Needs broader developer adoption

🌍 Where Qualcomm Wins

Qualcomm isn’t aiming to beat Nvidia — it’s defining a different AI battleground: personal and edge devices.

As AI moves closer to the user, Qualcomm’s energy-efficient chips will play a huge role in AI democratization.

🧩 The Big Picture: Different Battlefields

| Company | Focus Area | Strength | Weakness |
|---|---|---|---|
| Nvidia | Data centers, training | Software ecosystem, performance | Expensive, supply limits |
| AMD | Data centers, cloud AI | Efficiency, hybrid design | Smaller ecosystem |
| Qualcomm | Edge AI, consumer devices | Power efficiency, partnerships | Limited enterprise AI presence |

🧰 AI Software Ecosystem: The Secret Battlefield

Hardware is only half the story. In AI, software defines success.

| Company | Software Advantage | Ecosystem Strength |
|---|---|---|
| Nvidia | CUDA, TensorRT, DGX Cloud | Mature, developer-focused |
| AMD | ROCm (open-source software stack), HIP | Growing, open-source friendly |
| Qualcomm | AI Engine SDK | Optimized for mobile AI |

🔮 Future Trends to Watch in 2025–2026

- AI PC Era Begins: With Snapdragon X Elite and Apple M4 chips, more AI features will run on-device without an internet connection, changing how users interact with their devices.

- AI Training Costs Drop: As AMD and emerging startups enter the market, the cost to train large models will fall.

- Specialized AI Chips Rise: Expect custom chips from Google (TPUs), Amazon (Trainium), and Tesla (Dojo) to challenge the big three.

- Energy Efficiency Becomes Key: The next big leap won’t just be speed; it’ll be sustainability. Companies investing in green AI hardware will lead long-term.

🌍 The Global Chip Manufacturing Race

Another layer to the AI chip battle is manufacturing control.

Most AI chips are fabricated by TSMC (Taiwan Semiconductor Manufacturing Company) — a single company producing chips for Nvidia, AMD, and Qualcomm alike.

However, geopolitical tensions and supply chain disruptions have sparked an AI chip sovereignty movement.

- The U.S. CHIPS and Science Act (2022) offers incentives for companies to manufacture chips domestically.

- India, Japan, and Europe are investing billions in semiconductor self-reliance.

This global race will determine who controls AI at its core — the silicon.

In the long run, countries with chip independence will hold the real power.

💡 The Energy Efficiency War

Large AI models consume massive amounts of electricity; training GPT-4 reportedly used enough energy to power a small city for days.

That’s why energy efficiency is becoming the next big battleground.

- Nvidia claims its Blackwell GPUs use 30% less energy per training cycle.

- AMD emphasizes performance per watt for its MI300 series.

- Qualcomm NPUs can run some on-device AI tasks at milliwatt-level power.
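Energy figures in this debate come down to power × time. The sketch below shows that arithmetic for a data center training run and for a single on-device inference; every input (10,000 accelerators, 1 kW each, 30 days, a hypothetical 30% efficiency gain, a 500 mW NPU taking 20 ms per inference) is an illustrative assumption, not a measured or vendor-published figure.

```python
# Simple energy arithmetic: energy = power x time.
# Every input in the example run is a hypothetical, illustrative value.

def cluster_energy_mwh(num_accelerators: int, watts_each: float, hours: float) -> float:
    """Energy drawn by the accelerators, in megawatt-hours
    (ignores cooling, networking and host CPUs)."""
    return num_accelerators * watts_each * hours / 1e6


def inference_energy_mj(power_mw: float, latency_ms: float) -> float:
    """Energy of one on-device inference in millijoules.
    mW x ms = microjoules, so divide by 1000 to get mJ."""
    return power_mw * latency_ms / 1000


if __name__ == "__main__":
    baseline = cluster_energy_mwh(10_000, 1_000, 24 * 30)  # 10k chips, 1 kW, 30 days
    improved = baseline * (1 - 0.30)                        # hypothetical 30% saving
    print(f"Baseline: {baseline:,.0f} MWh, improved: {improved:,.0f} MWh")
    print(f"NPU inference: {inference_energy_mj(500, 20):.1f} mJ")
```

With these inputs the hypothetical cluster uses 7,200 MWh (5,040 MWh after a 30% cut), while one on-device inference costs about 10 mJ. The gap shows why efficiency matters at both ends: small per-chip savings multiply across thousands of accelerators, and milliwatt budgets decide what can run on a phone battery.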

The companies that master green AI hardware will not only dominate the market but also align with global sustainability goals.

My Opinion: Who Will Lead the Future AI Chip Race?

After analyzing all three — here’s my honest opinion as someone who follows AI trends closely:

- Nvidia will remain the leader in data center AI and large model training through 2025. Their combination of hardware and CUDA software gives them an edge that’s hard to beat.

- AMD will become the strongest challenger, especially for enterprises and cloud providers who want high performance at lower cost.

- Qualcomm, meanwhile, will dominate the edge AI market — bringing powerful AI to phones, laptops, and IoT devices.

If we look at the overall AI ecosystem, Nvidia still wears the crown — but AMD and Qualcomm are building the future for accessibility and innovation.

By 2026, we might not have a single “winner”, but rather a balanced landscape where each company owns a piece of the AI future.