Discover what context engineering is, why it matters in AI/LLM systems, and how you can apply it in practice. Learn strategies, use cases, and best practices to get more consistent, accurate, and aligned AI outputs.

Understanding Context Engineering — The Future Beyond Prompt Crafting

In the evolving world of AI and large language models (LLMs), context engineering is emerging as a foundational practice. It goes beyond just writing better prompts—it’s about designing the environment in which an AI operates. In this article, we’ll explore what context engineering is, why it matters, how you can implement it, and what challenges to watch out for.

What Is Context Engineering?

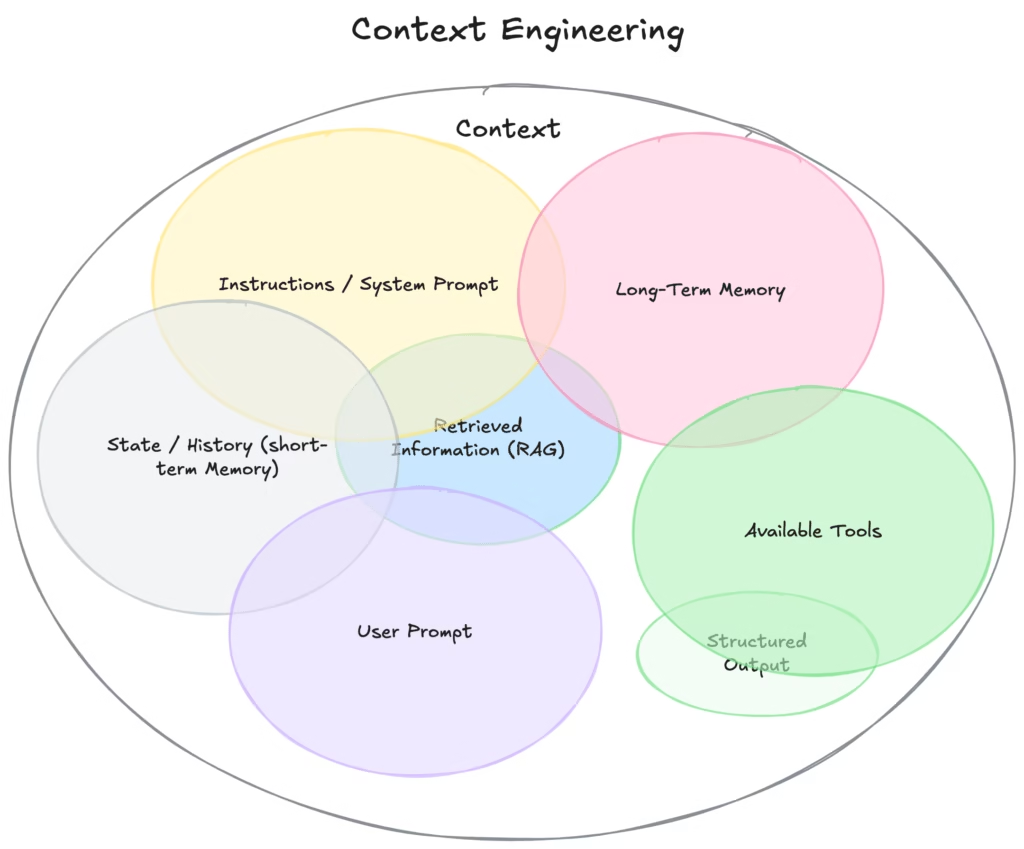

Context engineering involves structuring, curating, and managing all the relevant information an AI model needs to make high-quality decisions or outputs. It includes:

- background knowledge, domain rules, constraints

- memory or history from earlier interactions

- external tools, databases, APIs

- formatting, roles, personas, tone

- dynamic retrieval and adaptation mechanisms

In short: instead of just giving the model a prompt, you surround that prompt with the right scaffolding so the model “knows” what it’s doing.

This concept expands on and subsumes prompt engineering. Prompt engineering deals with formulating the instructions; context engineering determines what context is available to the model and how it is delivered.
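The split between prompt and context can be made concrete with a small sketch. Everything here is an illustrative assumption rather than a standard API: the `ContextBundle` class, its field names, and the section labels are hypothetical, and a real system would likely pass structured messages rather than one string.

```python
from dataclasses import dataclass, field


@dataclass
class ContextBundle:
    """Hypothetical container for everything the model sees besides the instruction."""
    role: str = ""
    domain_rules: list[str] = field(default_factory=list)
    history: list[str] = field(default_factory=list)
    retrieved_docs: list[str] = field(default_factory=list)


def build_model_input(prompt: str, ctx: ContextBundle) -> str:
    """Render non-empty context sections first, then the instruction itself."""
    sections = []
    if ctx.role:
        sections.append(f"ROLE:\n{ctx.role}")
    if ctx.domain_rules:
        sections.append("RULES:\n" + "\n".join(f"- {r}" for r in ctx.domain_rules))
    if ctx.history:
        sections.append("HISTORY:\n" + "\n".join(ctx.history))
    if ctx.retrieved_docs:
        sections.append("DOCUMENTS:\n" + "\n\n".join(ctx.retrieved_docs))
    # The prompt (the part prompt engineering focuses on) is only the last section.
    sections.append(f"TASK:\n{prompt}")
    return "\n\n".join(sections)
```

In this framing, prompt engineering tunes the `prompt` argument, while context engineering decides what goes into the `ContextBundle` and how it is rendered.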

Why Context Engineering Matters

Here are some of the key reasons why context engineering is becoming indispensable:

- Reduced hallucinations / errors

By grounding the model with accurate context (documents, rules, constraints), the risk of fabricating irrelevant or false content drops.

- Consistency & reliability

In multi-step tasks or agent workflows, context helps keep the model “on track” across steps.

- Scalability

For systems deployed at scale, you can modularize context, reuse context components, and centralize updates.

- Better integration with external data/tools

Context engineering allows you to feed in live data from APIs, databases, memory stores, etc.

- Governance, alignment, and guardrails

Including policy, compliance, or domain constraints in context ensures AI outputs remain safe and aligned with objectives.

Practitioners frequently observe that many failures in AI agents stem not from model shortcomings but from poor context design.

Key Techniques & Strategies in Context Engineering

Here are several approaches commonly used:

- Write / Supplement Context

Add explicit background, definitions, domain rules, personas, examples.

(E.g. “You are a finance analyst. Below is company data and constraints…”) - Select / Retrieve Context

Use retrieval systems (RAG, semantic search) to fetch the most relevant documents or memory snippets.

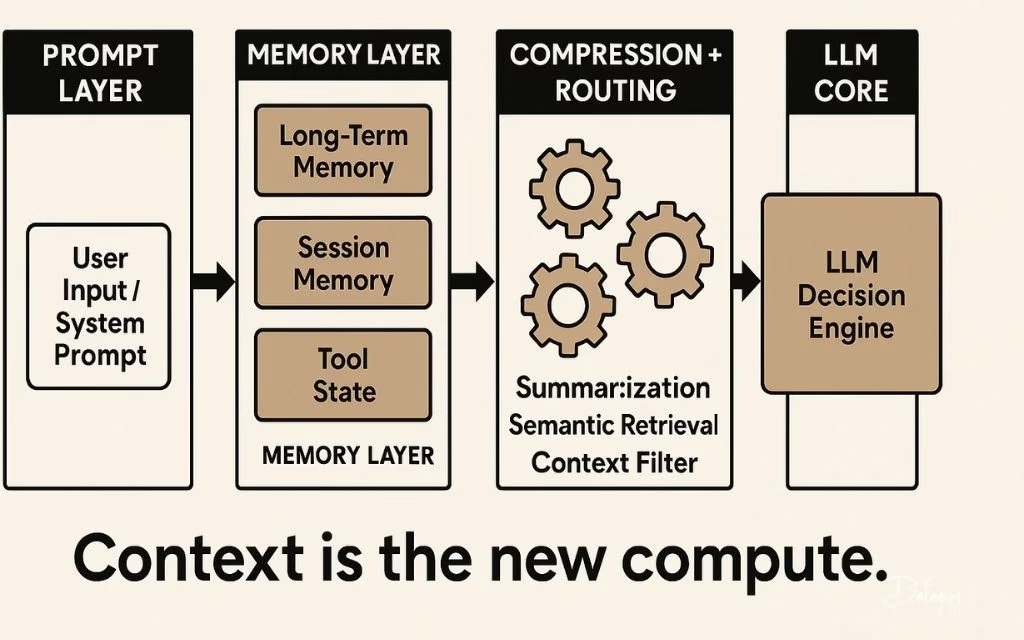

(Avoid flooding the model with everything.) - Compress / Summarize Context

Use summaries, embeddings, or abstraction so you keep essential info while reducing token cost. - Isolate / Modularize Context

Partition context into modules (e.g. user profile, domain rules, past conversation) to avoid “context bleeding” or contamination.

These four strategies (write, select, compress, and isolate) match the grouping that recent analyses describe as the primary approaches in context engineering.
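A minimal sketch of the select, compress, and isolate strategies follows. It rests on loud simplifications: word overlap stands in for semantic search, truncation stands in for a real summarizer, and the function names are hypothetical. It is meant to show the shape of each step, not a production retrieval pipeline.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase word set; a crude stand-in for an embedding."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def select(query: str, snippets: list[str], k: int = 2) -> list[str]:
    """Select: keep up to k snippets that share the most words with the query."""
    q = tokenize(query)
    scored = [(len(q & tokenize(s)), s) for s in snippets]
    ranked = sorted(scored, key=lambda pair: pair[0], reverse=True)
    # Snippets with no overlap are dropped instead of padding the context.
    return [s for score, s in ranked[:k] if score > 0]


def compress(text: str, max_words: int = 12) -> str:
    """Compress: naive truncation standing in for a real summarizer."""
    words = text.split()
    if len(words) <= max_words:
        return text
    return " ".join(words[:max_words]) + " ..."


def isolate(modules: dict[str, list[str]]) -> str:
    """Isolate: render each non-empty module under its own label."""
    blocks = []
    for name, items in modules.items():
        if items:
            blocks.append(f"[{name}]\n" + "\n".join(items))
    return "\n\n".join(blocks)
```

The "write" strategy is whatever text you author yourself (role, rules, examples) and place in one of the modules passed to `isolate`.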

Also, techniques like prompt chaining (breaking a complex task into smaller subtasks) help maintain clarity and control across steps.
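Prompt chaining can be sketched as a loop in which each step's output becomes the next step's input. The `call_model` parameter is an assumption: it represents whatever function wraps your LLM client, and `fake_model` below is only a placeholder so the example runs without a network call.

```python
from typing import Callable


def run_chain(task_input: str, steps: list[str],
              call_model: Callable[[str], str]) -> list[str]:
    """Run each step template in order, feeding the previous output into the next.

    Each template must contain the placeholder {input}.
    """
    outputs = []
    current = task_input
    for template in steps:
        prompt = template.format(input=current)
        current = call_model(prompt)
        outputs.append(current)  # keep every intermediate result for inspection
    return outputs


def fake_model(prompt: str) -> str:
    """Placeholder for a real LLM call; wraps the prompt in a marker."""
    return f"<answer to: {prompt}>"


if __name__ == "__main__":
    steps = ["Extract the key facts from: {input}", "Summarize these facts: {input}"]
    for result in run_chain("raw meeting notes", steps, fake_model):
        print(result)
```

Keeping the intermediate outputs makes it easier to see which step went wrong when the final answer is off.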

Practical Steps to Implement Context Engineering

If you want to adopt context engineering in a project, here’s a rough roadmap:

- Audit your information & knowledge sources

List internal documents, policies, domain rules, user logs, APIs, external data you may need.

- Segment & tag your context

Divide the context into coherent, manageable modules with metadata (e.g. “user history,” “product specs,” “compliance rules”).

- Design retrieval & ranking logic

Decide how and when to fetch relevant context (semantic search, vector embeddings, heuristics).

- Decide how to feed context to the model

  - Prepend to prompt

  - Use tool / function calls

  - Use memory or scratchpads

  - Use layered or cascading context

- Iterate & refine

Monitor where the model fails or drifts. Add, remove, or reformulate context modules. Use feedback loops.

- Governance & update cycles

Ensure context is kept up to date (e.g. new rules, data), version controlled, and audited for bias and security issues.
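Parts of the roadmap above can be sketched in a few lines: tagged modules with version and update metadata, a selection step, and the simplest delivery option (prepending to the prompt). The `ContextModule` class, its fields, and the 90-day freshness threshold are all hypothetical choices for illustration.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class ContextModule:
    """Hypothetical tagged, versioned unit of context."""
    name: str
    tags: frozenset[str]
    version: str
    updated: date
    text: str


def pick_modules(modules: list[ContextModule], needed_tags: frozenset[str],
                 today: date, max_age_days: int = 90):
    """Return (fresh modules matching any needed tag, names of stale matches)."""
    fresh, stale = [], []
    for m in modules:
        if not (m.tags & needed_tags):
            continue  # module is irrelevant to this request
        age = (today - m.updated).days
        (fresh if age <= max_age_days else stale).append(m)
    return fresh, [m.name for m in stale]


def prepend_to_prompt(modules: list[ContextModule], prompt: str) -> str:
    """Deliver context by placing labeled, versioned modules before the prompt."""
    header = "\n\n".join(f"[{m.name} v{m.version}]\n{m.text}" for m in modules)
    return f"{header}\n\n{prompt}" if header else prompt
```

Returning the stale module names, rather than silently dropping them, gives the update cycle something concrete to act on.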

Use Cases & Examples

- Customer Support Systems: context includes product manuals, prior tickets, user profile, escalation guidelines.

- Code Assistants / Developer Tools: context includes codebase files, API docs, coding conventions, prior commits.

- Domain Experts / Finance AI: context includes market data, regulatory rules, company financials, historical trends.

- Conversational Agents: context includes conversation history, user preferences, personality, domain limits.

In each case, the difference between a “good” and “great” system often comes down to how well context is engineered.

Challenges & Pitfalls

While context engineering is powerful, it comes with difficulties:

- Token or context window limits

LLMs can only take so much input. You must manage tradeoffs on what to include or compress.

- Context staleness

If your context becomes outdated, errors or inconsistencies will creep in.

- Context contamination / drift

Irrelevant or conflicting context can confuse the model.

- Performance & latency

Fetching, summarizing, and processing context adds overhead.

- Debugging complexity

When outputs are wrong, diagnosing whether the prompt or the context caused it can be tricky.
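One way to handle the window limit and the debugging problem together is to pack context by priority and record what was left out. The sketch below assumes a word count as a rough proxy for tokens (real tokenizers will give different numbers) and uses hypothetical names throughout.

```python
def approx_tokens(text: str) -> int:
    """Rough proxy: whitespace-separated words. Real tokenizers differ."""
    return len(text.split())


def pack_context(items: list[tuple[int, str, str]], budget: int):
    """Greedy packing by priority.

    items are (priority, name, text) tuples; a lower number is more important.
    Returns (included names, dropped names, tokens used).
    """
    included, dropped = [], []
    used = 0
    for priority, name, text in sorted(items, key=lambda it: it[0]):
        cost = approx_tokens(text)
        if used + cost <= budget:
            included.append(name)
            used += cost
        else:
            dropped.append(name)  # recorded so failures can be traced later
    return included, dropped, used
```

Logging the `included` and `dropped` lists alongside each model response makes it easier to tell whether a bad answer came from the prompt or from context the model never saw.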

Future Trends & Opportunities

- Standard protocols / formats for context

For example, the Model Context Protocol (MCP) is emerging as a way to standardize how context and tools connect.

- Smarter memory systems

More adaptive, long-term memories that feed only essentials into prompts.

- Automated context generation & optimization

Tools that automatically suggest which context pieces to include, drop, or reformat.

- Context-aware agents & orchestration

Multiple agents may share or pass context, creating more sophisticated workflows.

- Stronger governance, auditing, and ethical layers

As context becomes more powerful, maintaining fairness, transparency, and alignment becomes critical.

Conclusion

Context engineering is not just a buzzword—it’s fast becoming the backbone of effective and safe AI systems. While prompts remain important, context engineering gives you control, consistency, and robustness at scale. If you’re building with LLMs or AI agents, mastering context engineering will help you go from ad hoc hacks to principled, repeatable systems.